Select with window function (dense_rank()) in SparkSQL

Problem description

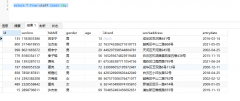

I have a table of customer purchase records, and I need to assign each purchase to a datetime window. One window is 8 days long. So if I make a purchase today and another one in 5 days, both purchases fall in window 1; but if I make a purchase today and the next one 8 days later, the first purchase is in window 1 and the last purchase is in window 2.

    create temporary table transactions
    (client_id int,
     transaction_ts datetime,
     store_id int);

    insert into transactions values
    (1,'2018-06-01 12:17:37', 1),
    (1,'2018-06-02 13:17:37', 2),
    (1,'2018-06-03 14:17:37', 3),
    (1,'2018-06-09 10:17:37', 2),
    (2,'2018-06-02 10:17:37', 1),
    (2,'2018-06-02 13:17:37', 2),
    (2,'2018-06-08 14:19:37', 3),
    (2,'2018-06-16 13:17:37', 2),
    (2,'2018-06-17 14:17:37', 3);

The window is 8 days. The problem is that I don't understand how to make dense_rank() OVER (PARTITION BY ...) look at the datetime and build 8-day windows. As a result I need something like this (the last column is the window number):

    1,'2018-06-01 12:17:37', 1,1
    1,'2018-06-02 13:17:37', 2,1
    1,'2018-06-03 14:17:37', 3,1
    1,'2018-06-09 10:17:37', 2,2
    2,'2018-06-02 10:17:37', 1,1
    2,'2018-06-02 13:17:37', 2,1
    2,'2018-06-08 14:19:37', 3,2
    2,'2018-06-16 13:17:37', 2,3
    2,'2018-06-17 14:17:37', 3,3

Any idea how to get this? I can run it in MySQL or Spark SQL, but MySQL does not support PARTITION BY here (window functions were only added in MySQL 8.0). I still cannot find a solution. Any help is appreciated!

Recommended answer

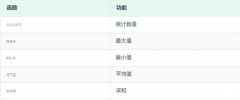

You can most likely solve this in Spark SQL by combining the time window function (window) with a window function partitioned by client:

    // In spark-shell, spark.implicits._ is already imported, which provides toDF and $"..."
    val purchases = Seq(
      (1, "2018-06-01 12:17:37", 1),
      (1, "2018-06-02 13:17:37", 2),
      (1, "2018-06-03 14:17:37", 3),
      (1, "2018-06-09 10:17:37", 2),
      (2, "2018-06-02 10:17:37", 1),
      (2, "2018-06-02 13:17:37", 2),
      (2, "2018-06-08 14:19:37", 3),
      (2, "2018-06-16 13:17:37", 2),
      (2, "2018-06-17 14:17:37", 3)
    ).toDF("client_id", "transaction_ts", "store_id")

    purchases.show(false)

    +---------+-------------------+--------+
    |client_id|transaction_ts     |store_id|
    +---------+-------------------+--------+
    |1        |2018-06-01 12:17:37|1       |
    |1        |2018-06-02 13:17:37|2       |
    |1        |2018-06-03 14:17:37|3       |
    |1        |2018-06-09 10:17:37|2       |
    |2        |2018-06-02 10:17:37|1       |
    |2        |2018-06-02 13:17:37|2       |
    |2        |2018-06-08 14:19:37|3       |
    |2        |2018-06-16 13:17:37|2       |
    |2        |2018-06-17 14:17:37|3       |
    +---------+-------------------+--------+

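    import org.apache.spark.sql.expressions.Window
    import org.apache.spark.sql.functions._

    // Group each client's purchases into fixed 8-day time windows
    val groupedByTimeWindow = purchases
      .groupBy($"client_id", window($"transaction_ts", "8 days"))
      .agg(collect_list("transaction_ts").as("transaction_tss"), collect_list("store_id").as("store_ids"))

    // windowByClient is not defined in the original snippet; it is assumed here
    // to partition by client and order by the start of the time window
    val windowByClient = Window.partitionBy("client_id").orderBy("window.start")

    // Number the non-empty time windows of each client: 1, 2, 3, ...
    val withWindowNumber = groupedByTimeWindow.withColumn("window_number", row_number().over(windowByClient))

    withWindowNumber.orderBy("client_id", "window.start").show(false)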

    +---------+---------------------------------------------+---------------------------------------------------------------+---------+-------------+
    |client_id|window                                       |transaction_tss                                                |store_ids|window_number|
    +---------+---------------------------------------------+---------------------------------------------------------------+---------+-------------+
    |1        |[2018-05-28 17:00:00.0,2018-06-05 17:00:00.0]|[2018-06-01 12:17:37, 2018-06-02 13:17:37, 2018-06-03 14:17:37]|[1, 2, 3]|1            |
    |1        |[2018-06-05 17:00:00.0,2018-06-13 17:00:00.0]|[2018-06-09 10:17:37]                                          |[2]      |2            |
    |2        |[2018-05-28 17:00:00.0,2018-06-05 17:00:00.0]|[2018-06-02 10:17:37, 2018-06-02 13:17:37]                     |[1, 2]   |1            |
    |2        |[2018-06-05 17:00:00.0,2018-06-13 17:00:00.0]|[2018-06-08 14:19:37]                                          |[3]      |2            |
    |2        |[2018-06-13 17:00:00.0,2018-06-21 17:00:00.0]|[2018-06-16 13:17:37, 2018-06-17 14:17:37]                     |[2, 3]   |3            |
    +---------+---------------------------------------------+---------------------------------------------------------------+---------+-------------+
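
Note that the window boundaries in this output (for example 2018-05-28 17:00:00) are not tied to any client's first purchase. Spark's window function cuts time into fixed intervals counted from 1970-01-01 00:00:00 UTC and displays them in the session time zone, so 17:00 here most likely reflects a session running at UTC-7. The following is a minimal plain-Scala sketch of that bucketing arithmetic, not Spark's actual implementation; the object name, the helper windowStart, and reading the strings as UTC are assumptions made for illustration.

```scala
import java.time.{Duration, LocalDateTime, ZoneOffset}
import java.time.format.DateTimeFormatter

object TumblingWindowSketch {
  private val fmt = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss")
  private val windowSeconds: Long = Duration.ofDays(8).getSeconds

  // Start of the epoch-aligned 8-day bucket that contains ts.
  // Assumption: ts is interpreted as UTC; Spark would use the session time zone.
  def windowStart(ts: String): LocalDateTime = {
    val epochSeconds = LocalDateTime.parse(ts, fmt).toEpochSecond(ZoneOffset.UTC)
    val start = epochSeconds - Math.floorMod(epochSeconds, windowSeconds)
    LocalDateTime.ofEpochSecond(start, 0, ZoneOffset.UTC)
  }

  def main(args: Array[String]): Unit = {
    Seq("2018-06-03 14:17:37", "2018-06-09 10:17:37", "2018-06-16 13:17:37")
      .foreach(ts => println(s"$ts -> window starting ${windowStart(ts)}"))
  }
}
```

Under these assumptions the three sample timestamps land in buckets starting on 2018-05-29, 2018-06-06 and 2018-06-14 (00:00 UTC), which are the same instants as the 17:00 boundaries shown above when viewed at UTC-7. If the windows should instead start at each client's first purchase, this fixed bucketing does not do that.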

If needed, you can explode the list elements in store_ids or transaction_tss to get back to one row per purchase.
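
As an alternative to collecting lists and exploding them again, the window number can be attached to each purchase row directly, which is closer to the layout requested in the question. The sketch below is one possible way to do this, assuming the same fixed 8-day time windows as above: it tags every row with its time window and then applies dense_rank() per client over the window start. The object name, the helper names samplePurchases and numberWindows, the temporary column name bucket, and the local SparkSession are assumptions for illustration.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.expressions.Window
import org.apache.spark.sql.functions.{col, dense_rank, window}

object WindowNumberPerPurchase {
  // Sample data from the question
  def samplePurchases(spark: SparkSession): DataFrame = {
    import spark.implicits._
    Seq(
      (1, "2018-06-01 12:17:37", 1), (1, "2018-06-02 13:17:37", 2),
      (1, "2018-06-03 14:17:37", 3), (1, "2018-06-09 10:17:37", 2),
      (2, "2018-06-02 10:17:37", 1), (2, "2018-06-02 13:17:37", 2),
      (2, "2018-06-08 14:19:37", 3), (2, "2018-06-16 13:17:37", 2),
      (2, "2018-06-17 14:17:37", 3)
    ).toDF("client_id", "transaction_ts", "store_id")
  }

  // Adds window_number: the dense rank of each row's 8-day time window per client
  def numberWindows(purchases: DataFrame): DataFrame = {
    val byClient = Window.partitionBy(col("client_id")).orderBy(col("bucket.start"))
    purchases
      // "bucket" is a temporary struct column with start/end of the time window
      .withColumn("bucket", window(col("transaction_ts").cast("timestamp"), "8 days"))
      // Rows sharing a bucket get the same number; empty buckets do not use up a number
      .withColumn("window_number", dense_rank().over(byClient))
      .drop("bucket")
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]") // assumption: local run for demonstration only
      .appName("window-number-sketch")
      .getOrCreate()

    numberWindows(samplePurchases(spark))
      .orderBy("client_id", "transaction_ts")
      .show(false)

    spark.stop()
  }
}
```

For the sample data this should number client 1's purchases 1, 1, 1, 2 and client 2's purchases 1, 1, 2, 3, 3, matching the window numbers of the grouped result. Because dense_rank() only ranks time windows that actually contain purchases, an 8-day stretch without purchases is skipped in the numbering, just as with row_number() on the grouped data.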

Hope this helps!
